Running VSCode with venv

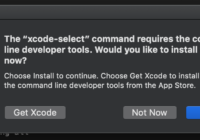

To use a venv from within VSCode, you need to set the path of the venv in Settings. To run notebooks in it, VSCode needs to install the required packages: just go to Show and Run Commands. You can now see that your venv is available for selection. Now you…
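The path to enter in Settings is that of the interpreter inside the venv. The following is a minimal sketch of how to create a venv and find that path with Python's standard `venv` module. The `.venv` folder name and the helper names `venv_python` and `create_venv` are assumptions for illustration. The post does not name the setting; in recent versions of the Python extension it is probably `python.defaultInterpreterPath`.

```python
import sys
import venv
from pathlib import Path


def venv_python(venv_dir: Path) -> Path:
    """Return where the interpreter lives inside a venv on this platform."""
    if sys.platform == "win32":
        return venv_dir / "Scripts" / "python.exe"
    return venv_dir / "bin" / "python"


def create_venv(venv_dir: Path, with_pip: bool = True) -> Path:
    """Create a venv at venv_dir (hypothetical helper) and return its interpreter path."""
    venv.create(venv_dir, with_pip=with_pip)
    return venv_python(venv_dir)


if __name__ == "__main__":
    # ".venv" in the current folder is an assumed location, not one from the post.
    interpreter = create_venv(Path(".venv"))
    # Paste this absolute path into the interpreter path setting in VSCode.
    print(interpreter.resolve())
```

Running the script once from the project folder prints an absolute path that can be copied into Settings, after which the venv should appear in the interpreter selection list.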