Confluent Hub Client

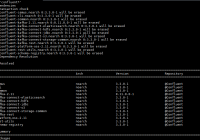

Confluent Hub is an online repository of extensions and components for Kafka. Kafka is built on an extensible model for many of its services: it allows plug-ins and extensions, which makes it generic enough to suit many real-world streaming applications. The repository includes both Confluent and third-party components. Generally,…